Product design: review systems

Designing the "unexpected project" is taking longer than anticipated, but not entirely for the wrong reasons (besides one day off to rest). I came up with the idea of adding browser extensions, and that alone took a solid extra day. There was also a part of the UI on the main website that I was so unsatisfied with that I had to take a day to figure it out. Interestingly, inspiration struck while I was thinking about the Steam gaming platform.

The problem was how to define a review system: how to allow user feedback and still be able to derive some structure and statistics from it.

The most common kinds of reviews are those for online shops and movies. They use a star-based rating ("out of 5" or "out of 10") and allow the user to write any additional feedback or comments. This is entirely sufficient for those use cases because the users who read those reviews need only that kind of data. It is interesting how we consume it:

- you check the aggregate score

    - if the score is bad, it probably means that something is really off with it, and most people won't bother further

    - if the score is within the higher quality range of interest - great

    - if the score is on the boundary

        - check several individual reviews to understand why they gave such a score

        - try to conclude whether your expectations could be satisfied or not (e.g. "okay, I will watch the movie even though some people say that it's a bit slow, but they say it also has a nice twist")

It is also interesting why reviews for such things are allowed to be so unstructured and mostly rely on a single metric such as the number of stars. I'd say it's because the number of parameters by which the content could be measured is too large, and in most cases the relative importance of any one parameter compared to the others is too small to justify splitting the review into individual parameters. Doing so would only create additional overhead without much value in return. For example, you could rate a movie by the quality of performance, quality of VFX, soundtrack, storytelling, camera-work, ... but for most people who visit movie database websites, it's only important to understand whether a movie could be a decent, enjoyable experience or not, and that is represented well enough by a simple number of stars. Likewise, in the case of online shops, you could rate the product by quality, price, delivery time, ease of use, quality of customer service, ... but in the end, for most people, it's only important whether they actually got what the product description stated and whether they are generally satisfied with it.

Some websites do require more detailed breakdowns of reviews. For instance, a specialized movie database website for professional critics could be interested in implementing a more detailed review system, such as the one described above. Again, it depends on the profile of the users and the goals of the website: what its purpose is and what kind of dynamics it wants to achieve.

So, for any case where reviews need to be more structured and detailed, breaking them into as many important parameters as needed is the way to go. However, there's still a choice to be made about which kind of scoring system to pick for each of the parameters. Do you just let users choose between "good/bad", or do you use an "out of 10" rating, or something else? Do parameters have default values, or are some of them completely optional?
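To make those choices concrete, here is a minimal sketch of one possible shape for a structured review. Everything in it is an assumption made for illustration: the parameter names, the 1 to 10 scale, and the decision to make the overall score required while per-parameter scores stay optional.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Optional

# Hypothetical parameter names for a movie review; not taken from any real site.
PARAMETERS = ("acting", "vfx", "soundtrack", "storytelling")


@dataclass
class Review:
    overall: int  # required, on an assumed 1..10 scale
    # Optional per-parameter scores; a missing key means "not rated".
    scores: dict[str, int] = field(default_factory=dict)
    comment: str = ""

    def __post_init__(self) -> None:
        unknown = set(self.scores) - set(PARAMETERS)
        if unknown:
            raise ValueError(f"unknown parameters: {sorted(unknown)}")
        for name, value in {"overall": self.overall, **self.scores}.items():
            if not 1 <= value <= 10:
                raise ValueError(f"{name} must be between 1 and 10, got {value}")


def parameter_averages(reviews: list[Review]) -> dict[str, Optional[float]]:
    """Average each parameter over the reviews that actually rated it."""
    result: dict[str, Optional[float]] = {}
    for name in PARAMETERS:
        values = [r.scores[name] for r in reviews if name in r.scores]
        result[name] = mean(values) if values else None
    return result


reviews = [
    Review(overall=8, scores={"acting": 9, "vfx": 6}),
    Review(overall=6, scores={"acting": 7}, comment="A bit slow, nice twist."),
]
averages = parameter_averages(reviews)
```

Leaving unrated parameters out of the average (instead of giving them a default value) keeps optional fields from skewing the statistics, at the cost of some parameters having very few data points.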

By having too many options for a single parameter, you might create "the paradox of choice". But by offering too few options, you lose potential precision and continuity in the data. Any additional rule added to the mechanics of the review system also creates overhead. These choices ultimately depend on user profiles, the type of content and the goals of the website.

Another interesting thing is controlling the reviews and making sure that malicious actors cannot flood the system, i.e. stopping "review bombing", spamming, and abuse. For online stores (for both physical and virtual goods), you can verify that the user has bought or installed the product. Bombing is then not a large concern, simply because in order to spam, one would have to go through the entire process of installing the software or spending money to buy it. For movies, users have to be logged in, and bombing is usually not an issue, simply because the risk is very low and it's rare that somebody has a motive big enough to bother.

So, good options are limiting the number of reviews allowed per unit of time, verifying users directly, verifying their emails, verifying their connection to the content being reviewed (e.g. "they did buy the product"), or building good heuristics to detect this kind of malicious behavior and putting a defense mechanism in place (banning a user from the platform when they abuse it; Twitter is an example).
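Two of these options, limiting reviews per unit of time and verifying the reviewer's connection to the product, can be sketched together. This is only an in-memory illustration; the `ReviewGate` class, its method names and the sliding-window policy are all hypothetical, and a real system would persist this state.

```python
from collections import defaultdict, deque


class ReviewGate:
    """Accepts a review only from a verified buyer who is under the rate limit."""

    def __init__(self, max_reviews: int, window_seconds: float) -> None:
        self.max_reviews = max_reviews
        self.window_seconds = window_seconds
        # Timestamps of recently accepted reviews, per user.
        self._recent: dict[str, deque[float]] = defaultdict(deque)
        # (user_id, product_id) pairs with a verified purchase.
        self._purchases: set[tuple[str, str]] = set()

    def record_purchase(self, user_id: str, product_id: str) -> None:
        self._purchases.add((user_id, product_id))

    def try_submit(self, user_id: str, product_id: str, now: float) -> bool:
        # Check 1: the user must have a verified connection to the product.
        if (user_id, product_id) not in self._purchases:
            return False
        # Check 2: sliding window, drop timestamps that have aged out.
        timestamps = self._recent[user_id]
        while timestamps and now - timestamps[0] >= self.window_seconds:
            timestamps.popleft()
        if len(timestamps) >= self.max_reviews:
            return False
        timestamps.append(now)
        return True


gate = ReviewGate(max_reviews=2, window_seconds=60)
for product in ("p1", "p2", "p3"):
    gate.record_purchase("alice", product)

first = gate.try_submit("alice", "p1", now=0)
second = gate.try_submit("alice", "p2", now=10)
third = gate.try_submit("alice", "p3", now=20)   # over the limit inside the window
later = gate.try_submit("alice", "p3", now=61)   # the review from t=0 has aged out
stranger = gate.try_submit("bob", "p1", now=0)   # no verified purchase
```

The timestamps are passed in explicitly rather than read from the clock, which keeps the sketch deterministic and easy to test.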

It should be obvious that precautions against this kind of behavior scale with the amount of sacrifice or cost an individual user has to bear (or, in rare cases, a collective) and with the importance of the type of content that is on the platform.

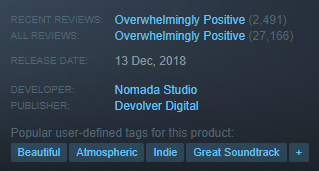

So why was I thinking about Steam? Because I recalled their "user-defined tags" section:

What I found interesting was the choice of "structuring the unstructured data" by almost letting users define the parameters of the reviews themselves - they pick what the product represents for each of them individually, and the most common interpretation rises to the top. Still, users have to use predefined tags, and that is sufficient for the website to use that data practically (i.e. summarise it by showing the most common tags first). By combining it with a separate measure of satisfaction (just an "upvote/downvote" whose aggregate is shown first in a summarised form such as "Overwhelmingly Positive"), it does well at representing the things gamers care about when considering buying a game.
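The combination of the two signals can be sketched roughly as follows. This is not how Steam actually computes anything: the function names are hypothetical, and the thresholds and every label other than "Overwhelmingly Positive" are made up for illustration.

```python
from collections import Counter


def top_tags(tag_votes: list[str], allowed: set[str], n: int = 3) -> list[str]:
    """Return the n most frequently applied tags, ignoring non-predefined ones."""
    counts = Counter(tag for tag in tag_votes if tag in allowed)
    return [tag for tag, _ in counts.most_common(n)]


def summarise(upvotes: int, downvotes: int) -> str:
    """Collapse upvote/downvote totals into a short label."""
    total = upvotes + downvotes
    if total == 0:
        return "No reviews"
    ratio = upvotes / total
    # Illustrative thresholds only; assumed, not taken from any real platform.
    if ratio >= 0.95:
        return "Overwhelmingly Positive"
    if ratio >= 0.70:
        return "Mostly Positive"
    if ratio >= 0.40:
        return "Mixed"
    return "Mostly Negative"


allowed_tags = {"Roguelike", "Indie", "Co-op"}
votes = ["Roguelike", "Indie", "Roguelike", "Co-op", "Indie", "Roguelike", "NotATag"]

common = top_tags(votes, allowed_tags, n=2)
label = summarise(upvotes=97, downvotes=3)
```

Restricting votes to a predefined set is what makes the counts comparable across users; free-text tags would need normalisation before they could be aggregated this way.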